The gap between the audit trail people think they have and the audit trail they actually have is wide enough to count as a category error. Application teams ship AI features and assume that logs, dashboards, and database history will be enough to answer post-hoc questions. Then a regulator, an inspector general, an internal audit team, or a counterparty in a contract dispute asks something specific. What was the prompt sent to the model when it made this recommendation on November 14? What context did it retrieve? What was the confidence score? Was that the production version of the prompt template, or was the experiment still running? The engineering team then spends a week trying to reconstruct the answer from logs scattered across four systems. Sometimes they get a defensible answer. Sometimes the answer is “we don’t know.”

We have been building software for regulated industries long enough — nuclear, fintech, healthcare, energy — to have seen both versions of this conversation play out, even before AI entered the picture. The version where the engineering team can pull the answer in five minutes from a single durable source is dramatically different from the version where they cannot. The difference is rarely about the diligence of the people involved. It is about what was built.

As we have moved more of our work into AI engineering, and as the regulatory frameworks around high-consequence AI have started to mature, we have settled on an architecture that we think gives the right foundation for the next generation of this work. This post is about the two patterns behind it, which in combination give you audit traceability by construction rather than by retrofit. The patterns are durable workflow orchestration (Temporal is what we use) and immutable data architectures (event-sourced state, content-addressed inputs, append-only event stores with cryptographic chains). Neither pattern is new. Their combination, deliberately applied to AI workflows, is what we are building on now.

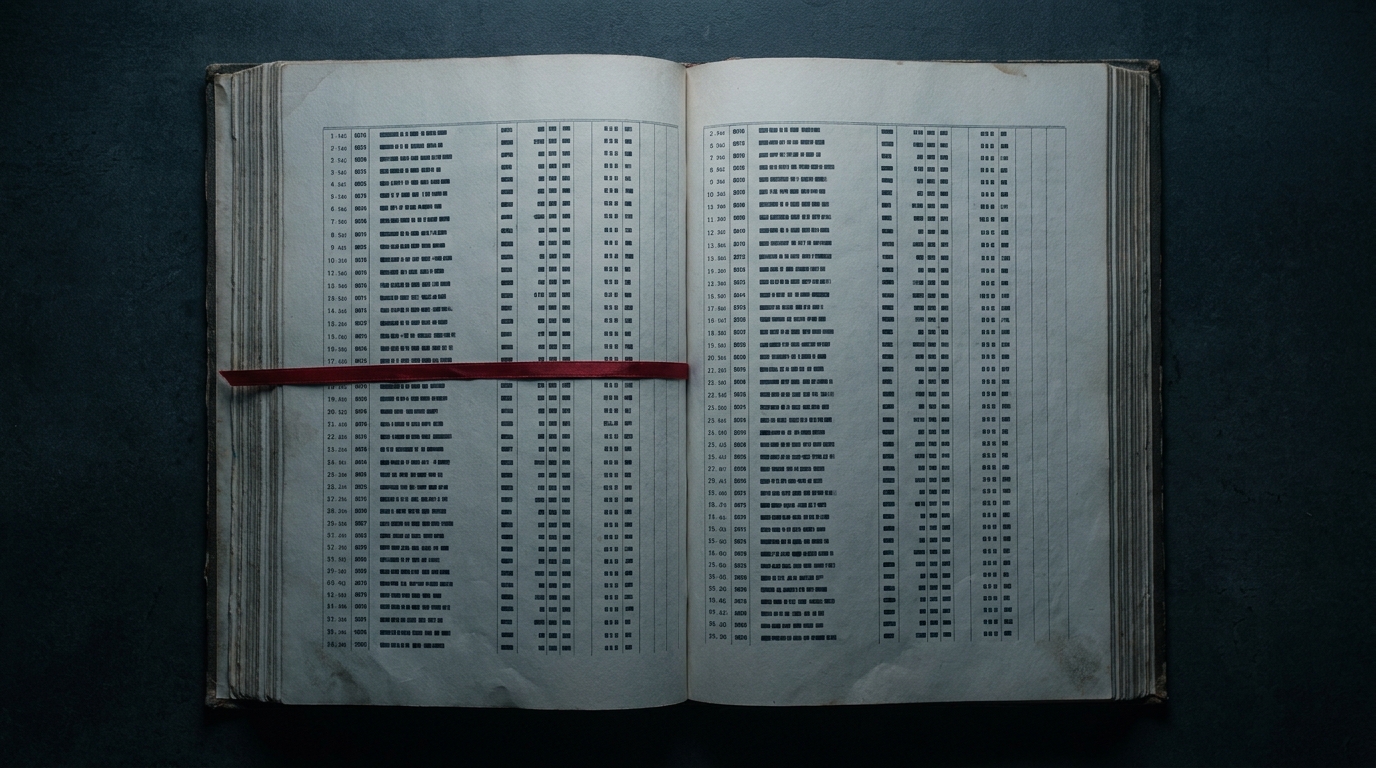

The audit trail you think you have

The conventional approach to audit trails for production AI is log-and-reconstruct. Application code is instrumented with logging statements. Logs are shipped to a central store. At audit time, an engineer queries the log store, joins records across services by timestamps or request IDs, and reconstructs what happened.

This works at small scale. It breaks at production scale, and it breaks worse for AI than for traditional applications, because the operationally significant data is scattered: the prompt template is in the application code repository, the retrieved documents are in the vector database, the model version is in the inference layer, the post-processing logic is somewhere downstream, and the final decision is in the user-facing service. Joining all of that at audit time is a forensic project, not a query.

There are also subtler failures. Logs from different systems often have different time bases, so causal ordering across services has to be inferred rather than recorded. Logs can be lost or rotated. A compromised system can modify its own logs without anyone noticing. The granularity of what gets logged was set when the application was first written, and rarely matches the granularity of the questions an auditor will eventually ask. The cost of reconstruction-based auditing is hidden until something specific is asked. Then it becomes the only thing on the team’s roadmap for a week. We have watched this happen often enough to believe the right time to address it is before the question is asked.
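The time-base problem is easy to show in miniature. The sketch below is a toy, not a model of any particular logging stack: the `LogLine` type, the service names, and the 2.5-second clock skew are all assumptions made for illustration. It joins log lines by request ID and orders them by timestamp, the way an audit-time query would.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LogLine:
    service: str
    request_id: str
    local_ts: float  # seconds, stamped by the emitting host's own clock
    message: str


# True causal order: retrieval -> inference -> decision.
# Assumed skew: the inference host's clock runs 2.5 s behind the others.
lines = [
    LogLine("retrieval", "req-42", 100.0, "retrieved 3 documents"),
    LogLine("inference", "req-42", 100.4 - 2.5, "model call completed"),
    LogLine("decision", "req-42", 100.9, "recommendation issued"),
]


def reconstruct(request_id, log_lines):
    """Join by request ID and order by timestamp, as an audit-time query would."""
    matched = [line for line in log_lines if line.request_id == request_id]
    return [line.service for line in sorted(matched, key=lambda line: line.local_ts)]


reconstructed = reconstruct("req-42", lines)
print(reconstructed)  # inference is listed before the retrieval that fed it
```

Nothing in the reconstructed order signals that it is wrong. The ordering was inferred from clocks rather than recorded, so the error only surfaces if someone already knows what the answer should be.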

What Temporal contributes

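Temporal is a durable execution engine. Workflows are written as ordinary code; the runtime persists the execution as an event history. State survives crashes. Workflows are replayable: on recovery, the runtime re-runs the workflow code against that history and reuses each activity's recorded result instead of calling the activity again. Every input to every activity is recorded, and every output is recorded. Workflow code can also be versioned using Temporal's versioning mechanisms, so that when the code changes, in-flight executions continue under the logic they started with while new executions use the new logic.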

For an AI workflow, this property is transformative. When you orchestrate model inference, retrieval, post-processing, and decision logic inside a Temporal workflow, every step is durably recorded with causal ordering preserved. The workflow history is the audit log, provided it is retained: Temporal removes the history of closed workflows once the namespace retention period expires, so histories that must outlive that period need to be archived or exported. There is no separate logging system to maintain, no scraping at audit time, no question of whether the logs are complete. When an auditor asks what the system did for user X on date Y, the answer is a specific workflow execution with a complete event history.

A second property matters: replay-driven debugging. An engineer or auditor can take a specific workflow execution and replay it deterministically against its recorded history. Replay does not call the model again; it feeds the workflow code the inputs and results captured at the time. If a decision turned out to be wrong, the team can examine exactly what the model saw, what context it retrieved, and what the intermediate steps produced. This is the difference between forensic investigation and looking at what happened.
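The record-then-replay idea can be sketched in a few lines of plain Python. This is a toy illustration of the concept, not Temporal's API: the `Recorder` class, the `step` method, and the stand-in `retrieve` and `call_model` functions are all hypothetical names invented for the example.

```python
import json


class NonDeterminismError(Exception):
    """Raised when replayed code diverges from the recorded history."""


class Recorder:
    """Toy execution context: records each step, or replays a prior history."""

    def __init__(self, history=None):
        self.replaying = history is not None
        self.history = list(history) if history else []
        self._cursor = 0

    def step(self, name, fn, *args):
        if self.replaying:
            event = self.history[self._cursor]
            self._cursor += 1
            if event["name"] != name or event["input"] != list(args):
                raise NonDeterminismError(
                    f"history has {event['name']!r}, code ran {name!r}"
                )
            return event["output"]  # recorded result; fn is never called
        output = fn(*args)
        self.history.append({"name": name, "input": list(args), "output": output})
        return output


calls = {"model": 0}  # counts real model invocations


def retrieve(question):
    return ["doc-a", "doc-b"]  # stand-in for a vector-store lookup


def call_model(question, docs):
    calls["model"] += 1
    return f"answer to {question!r} using {len(docs)} docs"


def decide(ctx, question):
    docs = ctx.step("retrieve", retrieve, question)
    return ctx.step("call_model", call_model, question, docs)


live = Recorder()
original = decide(live, "is claim 17 eligible?")

saved = json.loads(json.dumps(live.history))  # history survives serialization
replayed = decide(Recorder(history=saved), "is claim 17 eligible?")
```

After the replay, `replayed` equals `original` and `calls["model"]` is still 1: the second run read the model's answer from the history rather than asking again. The same constraint applies in a real durable execution engine, which is why the orchestration code itself has to be deterministic.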

What immutable data architectures contribute

Temporal gives you durable execution. Immutable data architectures give you durable inputs and outputs.

The pattern: instead of storing prompts, retrieval documents, model configurations, and decision artifacts in mutable database rows, store them as content-addressed objects, indexed by a cryptographic hash of their content. The audit record does not say “we used the latest version of the prompt template.” It says “we used the prompt template with hash 0x3a7f…” and that hash is independently verifiable. The same pattern applies to retrieval context. When the AI pulls documents from a knowledge base, the audit record captures the hash of each retrieved document, not just its ID. If the source documents are later updated — which they will be — the audit record still tells you exactly what the system saw at the time of inference.
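A minimal sketch of content addressing, assuming SHA-256 as the hash and an in-memory dictionary standing in for durable object storage. The `ContentStore` class, the field name `prompt_template_sha256`, and the template strings are hypothetical.

```python
import hashlib


class ContentStore:
    """Toy content-addressed store: the key is the SHA-256 of the content."""

    def __init__(self):
        self._blobs = {}

    def put(self, content: bytes) -> str:
        digest = hashlib.sha256(content).hexdigest()
        self._blobs.setdefault(digest, content)  # never overwrites an entry
        return digest

    def get(self, digest: str) -> bytes:
        content = self._blobs[digest]
        # Re-hash on read so silent corruption or substitution is caught.
        if hashlib.sha256(content).hexdigest() != digest:
            raise ValueError("stored content does not match its address")
        return content


store = ContentStore()
v1 = store.put(b"Summarize the policy for: {question}")
v2 = store.put(b"Summarize the policy, citing sections, for: {question}")

# The audit record pins the exact template used, not "the latest version".
audit_record = {"prompt_template_sha256": v1}
template_at_inference = store.get(audit_record["prompt_template_sha256"])
```

Editing the template produced a new address (`v2`) and left the old one in place, so the record written at inference time still resolves to the bytes the system actually used.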

Stacked together, these patterns yield an event-sourced AI decision log. Each inference event is an immutable record containing the hash of the prompt template, the hash of every retrieved document, the model identifier and version, the raw model output, the post-processing applied, the final decision, the confidence score, the refusal status if applicable, and a pointer to the workflow execution that produced the event. The record is cryptographically chained to the records before it, so modifications are detectable. When the auditor asks what the system did, the answer is a series of verifiable events, not a narrative built from logs.
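The record described above can be sketched as a hash-chained, append-only list. This is a sketch under stated assumptions: SHA-256, canonical JSON as the serialization, an all-zero `GENESIS` value for the first record, and placeholder field values throughout. None of the names reflect a specific product or schema.

```python
import copy
import hashlib
import json

GENESIS = "0" * 64  # assumed "previous hash" for the first record


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def _digest(body: dict, prev_hash: str) -> str:
    # Canonical JSON so the same body always hashes to the same value.
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return sha256_hex((prev_hash + canonical).encode("utf-8"))


def append_event(log: list, body: dict) -> dict:
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"body": body, "prev_hash": prev_hash, "hash": _digest(body, prev_hash)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["body"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


def example_event(decision: str, confidence: float) -> dict:
    """Build an inference event with placeholder values for illustration."""
    return {
        "prompt_template_hash": sha256_hex(b"Answer using only: {context}"),
        "retrieved_doc_hashes": [sha256_hex(b"policy text"), sha256_hex(b"faq text")],
        "model": {"id": "example-model", "version": "example-version"},
        "raw_output": f"{decision.upper()} ({confidence})",
        "post_processing": "parse_label_and_score",
        "decision": decision,
        "confidence": confidence,
        "refused": False,
        "workflow_execution": "example-workflow-id/example-run-id",
    }


log = []
append_event(log, example_event("eligible", 0.91))
append_event(log, example_event("ineligible", 0.67))
intact = verify_chain(log)

# Simulate a quiet edit to an old record.
tampered = copy.deepcopy(log)
tampered[0]["body"]["decision"] = "ineligible"
tamper_detected = not verify_chain(tampered)
```

The chain on its own only makes edits detectable to someone who holds a trusted copy of a later hash. In practice that means anchoring the most recent hash somewhere the application cannot write, which this sketch leaves out.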

The combination

The two patterns are useful separately. Their combination is where audit traceability stops being a feature and becomes a property of the substrate.

You get four things at once. Complete causal history: every step that produced a decision is recorded, in order, on a single time base. Replay-driven debugging: a specific execution can be re-run deterministically against the captured inputs. Tamper evidence: a compromised application cannot quietly rewrite its own history because the event chain is cryptographically verified. And regulatory readiness without a separate project: the same event store your engineers query for debugging is also the system of record for compliance.

That last property is the one we increasingly care about. The frameworks now emerging around high-consequence AI (OMB M-25-21 for federal use cases, model risk management guidance for banks, FDA’s evolving thinking on AI in medical devices, audit-trail integrity requirements in FISMA reviews) all ask, each in its own terms, for evidence of ongoing monitoring and impact assessment over time. If you have built audit traceability in, that evidence is already there. If you have not, you are building a compliance system on top of a system that was not designed to answer the questions, which is how teams end up shipping software whose audit story is durable in deck form and fragile in production.

Why we build it this way now

You can construct audit-traceable AI by retrofit or by construction. The retrofit approach — log scraping, audit-time reconstruction, periodic forensic analysis — satisfies a regulator who is not paying close attention. The by-construction approach satisfies a regulator who is.

In regulated industries, the trajectory of oversight is one-way. The questions are getting more specific, not more general. The evidence accepted is getting more rigorous, not more lenient. The regulated-software world learned three lessons over decades: that audit traceability designed in costs less than audit traceability bolted on, that immutable records are worth what they cost, and that the question “what did the system do” needs an answer that does not require forensic reconstruction. All three apply directly to the AI generation of this work. If you are building AI for an environment where wrong answers have consequences, and where someone external to your team will eventually want to verify what your system did, build the audit traceability in. The engineering cost is modest, paid early. The cost of the alternative is also modest, until it isn’t.

About the author: Allen Elks is Partner and Chief Architect at IVC. IVC has been engineering software for regulated environments since 1987, with work spanning nuclear, financial services, healthcare, and energy. The firm’s AI engineering work is a more recent chapter — the architectural patterns described in this post are what we are now building on. Allen is a third-generation U.S. military veteran.